7 behavioral insights tips from pioneering cities in the field

Just a few years ago, it would have been hard to find anyone working in local government who knew much about behavioral economics. The idea that City Hall can test out ways of “nudging” residents to, say, pay off overdue utility bills or sign up for preventive health screenings is still pretty new.

But the practice is starting to take hold, and it’s delivering results. Those results have been fueled by What Works Cities, a Bloomberg Philanthropies initiative that has helped 100 U.S. cities boost their use of data and evidence to improve outcomes for residents. Cities have used behavioral insights to increase payment of back taxes, recruit a more diverse police force, and improve trash collection, among other things. Behavioral insights are one of the most important tools a city can have in its innovation toolbox.

A partner in most of these efforts so far is the Behavioral Insights Team, or BIT, a U.K.-based social purpose company that helps cities develop and evaluate ideas for improving government services. Over the past three years, BIT has launched 99 experiments in 37 U.S. cities as part of the What Works Cities program. These are typically low-cost but rigorous experiments that use randomized control trials to test whether tweaks to letters, emails, text messages, and other city communications with residents produce a measurable change in how people respond. The tweaks don’t always work. But when they do, cities can scale up those strategies knowing they will pay off.
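
For readers curious what the core of such a trial looks like in practice, here is a minimal sketch in Python. It is an illustration under stated assumptions, not BIT's or any city's actual method: the function names, the resident IDs, and the 50/50 split are all hypothetical. The idea is simply that residents are split at random between the current letter and the redesigned one, so that any later difference in response rates can be attributed to the letter rather than to who happened to receive it.

```python
import random


def assign_letters(resident_ids, seed=0):
    """Randomly split residents into a control group (current letter)
    and a treatment group (redesigned letter).

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)  # fixed seed so the assignment can be reproduced
    shuffled = list(resident_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"control": shuffled[:half], "treatment": shuffled[half:]}


def response_rate(responded, group):
    """Share of a group that took the desired action (e.g. paid a bill)."""
    return sum(1 for resident in group if resident in responded) / len(group)


# Made-up example: 1,000 placeholder resident IDs, not real data.
groups = assign_letters(range(1000), seed=42)
```

Once the letters go out, `response_rate` can be computed for each group and the two rates compared.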

To take stock of what cities have learned from doing this, Bloomberg Cities talked with Michael Hallsworth, BIT’s North America Director, and Sasha Tregebov, who manages the team’s U.S. engagements under What Works Cities. We also spoke with several city leaders across the U.S. who have been running behavioral experiments both with BIT and on their own or with other partners. Here are seven themes that emerged.

1. There’s value in testing and learning

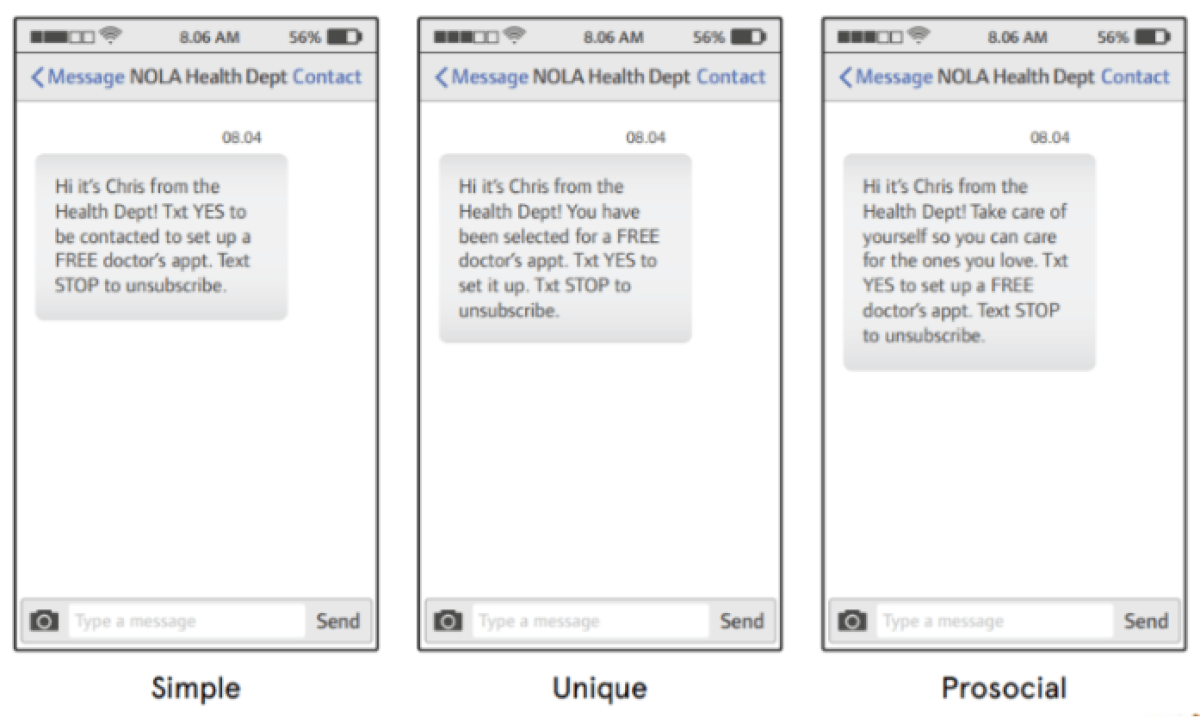

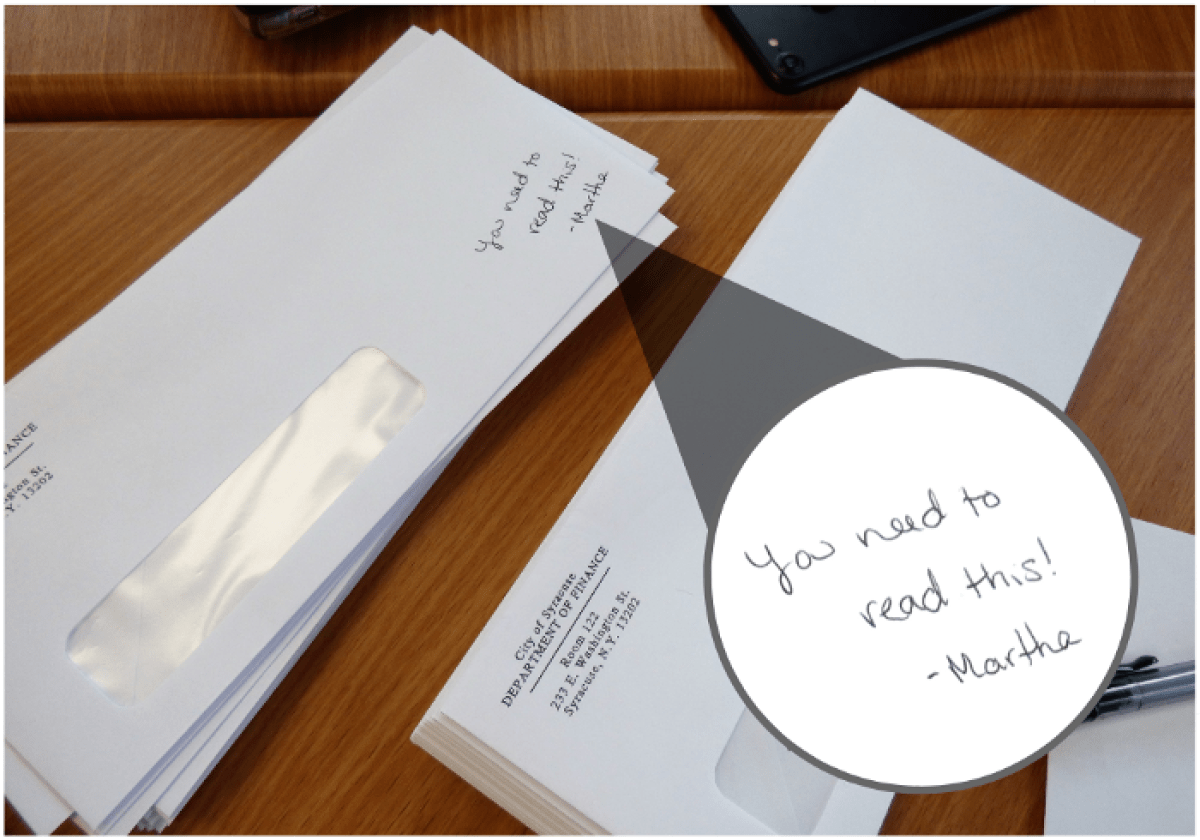

The City Hall success stories are starting to pile up. Syracuse, N.Y., saw a $1.5-million jump in payments of property taxes owed after mailing out letters with a handwritten message on the envelope. Chattanooga, Tenn., found dozens more people of color applying to become police officers after testing new messaging on its recruitment postcard. New Orleans found residents eligible for free health care were 40 percent more likely to see a doctor after receiving a text message saying they had “been selected for a FREE doctor’s appt.” More case studies and use cases are in the BIT report, “Behavioral Insights for Cities.”

“We’re trying to get marginal shifts in behavior that are cost effective,” said Hallsworth, noting that strategies shown to work can be scaled up. “So even relatively small improvements can be quite successful.”

James Wagner agrees. As Chief of Performance Strategy and Innovation in Tulsa, Okla., he worked with BIT on two behavioral trials. One produced a 15-percent boost in the number of people paying off traffic fines after the city sent them a text message reminding them when their payment was due.

“The takeaway for me was to test something before going all-in on a strategy,” Wagner said, noting that the text-messaging trial cost just $100 to run. “It might not have worked. We could have easily just assumed it would work, and before we’d know it, we’d be $15,000 down the road with a vendor and not know if it works or not.”

2. There’s no such thing as failure

Not every trial produces a clear win. A number of cities have shared the same experience: They put a lot of thought into redesigning an archaic government letter, for example, only to find through testing that the new letter makes no difference. Sometimes, they even find the old letter works better.

That can be frustrating, said Katie Shifley, a senior financial analyst in the Portland, Ore., budget office. Portland has run a dozen trials with eight different departments; some panned out and others did not. Still, the act of thinking through what evidence matters, and designing an experiment to find it, is always worthwhile. “Even when we get null results, we’re seeing the value in just sitting down with program staff and creating the space for thinking about how they can improve their service to the public,” Shifley said.

Adria Finch agrees. As head of the Syracuse innovation team, an in-house City Hall consultancy initially funded by Bloomberg Philanthropies, Finch has led a number of behavioral experiments. The recent one targeting back taxes paid off big; others did not.

She thinks that’s just fine. “We always learn something from these experiments,” she said. “We’ll keep doing them, and we’re OK with an occasional failure. That’s the cool thing with these: It’s not a huge investment. We might as well try. If it works, amazing! And if not, then we move on.”

3. Look for low-hanging fruit

The earliest city experiments with behavioral insights have focused on letters and other forms of communication with residents. There are good reasons for that, Hallsworth said. First of all, cities send out loads of letters. Second, tweaking their language or graphic design doesn’t cost much. And third, it’s relatively easy to measure whether those changes had any impact. “You tend to get results fairly quickly,” whether you’re looking at utility bills, code enforcement, or other kinds of revenue collection, he said.

“These things aren’t glamorous. But they’re important to keep cities going. And we find them quite good proving grounds for this approach.”

Getting some quick wins is important. It helps skeptical agency staff see the value in doing experiments that take additional time and effort. “You have to remember, integrating behavioral insights in local government is a change initiative,” said Brent Stockwell, assistant city manager in Scottsdale, Ariz. “With any change initiative, it takes time. You need to look for the smaller wins to get to bigger wins.”

4. Leadership support is essential

Because this work is so new to local government, the staff doing it need the mayor, city manager, or others at a high level to be champions. Those leaders don’t need to know how to run an A/B test. But they do need to articulate a clear vision for why using data and evidence is important. They also need to provide cover for those leading the charge, and set an expectation for cross-departmental cooperation.

“This is really cutting edge,” said BIT’s Tregebov. “It’s not just accelerating efforts that were already happening in cities. It’s creating an entirely new way of working. So having a mayor stand behind that and say, ‘We’re committed to learning what works and doing what works’ — that can help resolve a whole bunch of issues you can get into in the day-to-day of running these projects.”

5. Someone needs to own responsibility for using behavioral insights

Cities won’t be able to scale up this approach without staff who know what they’re doing. Ideally, those people are also positioned within City Hall in a role that works across many departments, such as in an innovation team or the city manager’s office.

In Scottsdale, the team leader for behavioral insights is Cindi Eberhardt, who has earned the nickname “BIT Ninja Warrior.” After partnering with BIT on several trials and a two-day training for more than 20 employees, Eberhardt set up teams within different departments to push this work deeper into the organization. Representatives from each department meet monthly to discuss what they’re learning.

“You have to have structure and regular activity,” she said. “Even though it has the blessing of the city manager’s office, this work is not in anybody’s work plan. People are very busy. And it’s not yet embedded in our DNA to do this.”

6. You don’t need a Ph.D. in statistics — but you do need expertise

The testing model BIT uses to train city staff is relatively simple and low-cost. Still, data chops are required — more than you’ll find in many local administrations.

“It gets really complicated really fast,” said Portland’s Lindsey Maser, who’s worked on several behavioral projects. “Even if you can find someone at City Hall who has the expertise to help you design a randomized control trial, that’s not their job.” What Portland needs, Maser said, is a dedicated staffer who can act as an internal consultant within the city: someone who can not only help with the technical aspects of designing trials and analyzing the results, but also keep up with the latest research on behavioral insights.
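
To give a sense of what “analyzing the results” can involve, here is a minimal sketch of one common textbook check, a two-proportion z-test, written in plain Python. It is an assumption for illustration, not a description of how Portland or BIT analyzes its trials; the function name and the counts in the usage line are hypothetical. The test asks whether the gap between two response rates is larger than chance alone would plausibly produce.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Compare response rates of group A (e.g. old letter) and group B (new letter).

    Returns (difference in rates, z statistic, two-sided p-value).
    Hypothetical helper for illustration only.
    """
    rate_a = success_a / n_a
    rate_b = success_b / n_b
    # Pooled rate under the assumption that both letters perform the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_b - rate_a, z, p_value


# Made-up counts, not results from any city's trial.
diff, z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
```

A small p-value suggests the new letter's effect is unlikely to be a fluke. Real trials raise further questions, such as sample size and how outcomes are recorded, which is where the dedicated expertise Maser describes comes in.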

This is one area where cities may want to look outside City Hall for partnerships. For its successful back-taxes project, Syracuse partnered with the Maxwell X Lab at Syracuse University, whose mission is to help the public and nonprofit sectors apply behavioral insights to their work. A local foundation provided the financial support.

7. Engage your information technology and legal departments

A common obstacle cities face in this work is their own data systems. Clunky databases make it difficult to analyze whether an intervention worked or not.

“One of the lessons we’ve learned is to bring IT into the conversation early,” Tregebov said. “Early on in our work through What Works Cities, we kept having the trouble of saying, ‘Oh, we’ll get the data after we design the intervention and the experiment.’ And the IT people would look at us and say, ‘We don’t have this data! This isn’t how the system works!’”

The same goes for legal. In Syracuse, efforts to test out new designs and language for certain letters hit snags with the Law Department, which cited concerns about residents hearing differing messages from the city. “It’s important to have an open dialogue with your legal team and ask them what they’re comfortable with and if they have any tips,” said Finch. “If you involve them in the beginning, it’s better in the long run.”